CUBRID Disk Manager and File Manager — Volumes, Sectors, Files, Page Allocation, and Extension

Contents:

- Theoretical Background

- Common DBMS Design

- CUBRID’s Approach

- Source Walkthrough

- Source verification (as of 2026-05-01)

- Beyond CUBRID — Comparative Designs & Research Frontiers

- Sources

Theoretical Background

Section titled “Theoretical Background”A relational engine that wants to outlive its process needs a storage substrate cheaper than the operating system’s file abstraction at the granularity it cares about. Database Internals (Petrov, Ch. 3 “File Formats”) frames this gap directly: the OS hands the engine byte streams in files, but the engine wants fixed-size pages organized into per-table or per-index files, with hooks to grow on demand and to reclaim space without paying syscall cost on every page touch. Every disk-resident DBMS therefore re-implements a small file system on top of the OS one.

The textbook account names three pieces such a system needs:

- A page identifier scheme. The smallest I/O unit is a fixed-size

page (4 KB to 64 KB depending on engine). The engine assigns each

page a stable identifier so that index entries, log records, and

the buffer manager can name pages without re-deriving the OS-level

offset every time. The identifier usually decomposes into “which

container” + “which page inside it”; PostgreSQL writes

(relfilenode, blocknumber), Oracle writes a DBA (file number + block number), CUBRID writesVPID = (volid, pageid). - An allocation unit larger than the page. Allocating one page at a time to a relation produces unbounded fragmentation as the relation grows. The textbook fix is the extent: a contiguous run of N pages reserved at once, owned by exactly one relation, tracked in a per-container bitmap. Oracle calls them extents, InnoDB calls them extents (1 MB = 64 × 16 KB), SQL Server calls them uniform extents (8 × 8 KB = 64 KB), CUBRID calls them sectors (64 × 16 KB = 1 MB by default).

- A growth strategy. A relation that fills its current container must either grow that container or claim a new one. The strategy has to be crash-safe (a partially-grown container after crash must not look full) and concurrent (one growing transaction must not block readers of unrelated tables on the same volume). ARIES-style nested top-actions (Mohan et al. 1992) are the canonical solution.

Two implementation choices follow from this model and shape every on-disk engine:

- How the engine splits durable from ephemeral storage. Sort spills, hash partitions, and intermediate query results need pages but do not need to survive a crash. An engine that routes them through the same machinery as table data pays WAL overhead it does not benefit from. Most engines therefore expose a temporary tablespace distinct from the permanent one.

- Where the per-relation page bitmap lives. PostgreSQL uses a separate FSM fork per relation; InnoDB stores extent descriptors in a tablespace-wide bookkeeping file; CUBRID embeds the bitmap inside the relation (file) itself, in two complementary tables — Partial-Sectors (sectors with at least one free page) and Full-Sectors (sectors with all pages allocated).

This document tracks how CUBRID realizes each piece in

src/storage/disk_manager.{h,c} (volume / sector layer) and

src/storage/file_manager.{h,c} (file / page layer).

Common DBMS Design

Section titled “Common DBMS Design”The textbook gives the model; this section names the engineering

conventions that almost every disk-resident DBMS — PostgreSQL,

Oracle, MySQL InnoDB, SQL Server, CUBRID — adopts in some form.

CUBRID’s specific choices in ## CUBRID's Approach are best read as

one set of dials within this shared design space, not as inventions.

Volume = OS file containing many pages

Section titled “Volume = OS file containing many pages”The lowest layer of the engine treats each backing OS file as a

volume: a contiguous numbered region of fixed-size pages whose

on-disk layout is fully under the engine’s control. Page 0 is a

volume header describing the volume to the engine; some prefix of

the remaining pages is a bitmap tracking which extents are in use;

the rest holds actual data. PostgreSQL uses one OS file per relation

fork; Oracle uses one OS file per data file; InnoDB uses one OS file

per tablespace (or one shared ibdata file in legacy mode); CUBRID

uses one OS file per volume, where one database has at least one

permanent volume and may grow to dozens.

Extent (sector) as the disk-manager allocation unit

Section titled “Extent (sector) as the disk-manager allocation unit”Allocating page-by-page is too fine-grained for the disk manager — every allocation would touch the bitmap, the buffer pool, the WAL. Allocating in extents amortizes the cost. The extent size is a tuning choice: small extents (Oracle’s INITIAL 64K) waste less space on small tables but produce more bitmap entries; large extents (InnoDB’s 1 MB) reduce bitmap traffic but waste more space on tables that fit in one extent. CUBRID picks 64 pages × 16 KB = 1 MB by default.

Logical file as a bundle of extents owned by a relation

Section titled “Logical file as a bundle of extents owned by a relation”The relation-level identity does not match the volume-level identity:

one B-tree may span dozens of sectors scattered across multiple

volumes, and one volume may host hundreds of relations. The standard

fix is a logical file layer: each relation owns a numbered file,

the file owns a list of reserved sectors, and the sectors belong to

one or more volumes. PostgreSQL’s relfilenode, Oracle’s segment,

InnoDB’s per-table .ibd, and CUBRID’s VFID = (volid, fileid) all

serve this role. The identifier names the first page of the file’s

header so the file manager can locate its own metadata in O(1).

Permanent vs temporary purpose split

Section titled “Permanent vs temporary purpose split”A query that spills a sort run wants a page to write to but does not

want a WAL record for every byte. Engines split storage into

permanent (logged, durable) and temporary (unlogged,

crash-discarded) purposes. Oracle has PERMANENT and TEMPORARY

tablespaces; PostgreSQL has temp_tablespaces; InnoDB has the

innodb_temp_data_file_path; CUBRID has the same two-purpose split

plus an unusual third option — a permanent volume used for

temporary data, which the user can pre-allocate via addvoldb to

reserve disk space for sorts without auto-growing into a temporary

volume.

Two-step allocation — cache reserve, then bitmap commit

Section titled “Two-step allocation — cache reserve, then bitmap commit”The naive allocation loop walks each volume’s bitmap to find a free

extent, sets the bit, and returns the extent. Under concurrency this

produces three problems: walking many bitmaps is slow, two

transactions can race for the same bit, and a bitmap miss may force a

volume extension that takes hundreds of milliseconds. The standard

fix is to keep an in-memory free-space cache per volume and to

reserve from the cache (a short critical section over scalar fields)

before committing the reservation to the bitmap. CUBRID names this

the Disk Cache and runs the two-step protocol explicitly:

disk_reserve_from_cache (Step 1) decides which volume gives how

many sectors; disk_reserve_sectors_in_volume (Step 2) walks the

sector table and flips the bits.

Per-file extent table split into Partial and Full

Section titled “Per-file extent table split into Partial and Full”Within a file, the manager has to decide which sectors still have

free pages (next allocation is cheap) versus which sectors are fully

used (no point looking). Almost every engine maintains the split.

Oracle writes segment header pages with separate FREELIST groups;

InnoDB writes XDES extent descriptor pages with state bits; CUBRID

maintains two extensible-data tables embedded in the file itself —

Partial Sectors Table (entries are (VSID, page bitmap)) and

Full Sectors Table (entries are bare VSIDs). Allocation always

takes from the head of Partial; when a sector becomes full the

entry is moved to Full; deallocation moves it back.

Numerable file for ordered access

Section titled “Numerable file for ordered access”Some workloads need pages in allocation order, not in disk order:

external sort wants the i-th page of a sort run to land on the i-th

write, regardless of which sector it physically came from. Engines

support this through an extra index on the file. PostgreSQL’s

tuplestore keeps a per-tuple position; CUBRID exposes it as a file

flag (FILE_FLAG_NUMERABLE) and adds a third table —

User Page Table (entries are bare VPIDs in allocation order).

Volume extension via nested top-action

Section titled “Volume extension via nested top-action”Growing a volume on disk is a multi-step operation: write the new

header bitmap region, extend the OS file, update the disk cache,

update the volume info file. A crash mid-extension would either

leave dead bytes or, worse, corrupt the cache. The textbook fix

(Liskov & Scheifler 1982; Mohan et al. 1992) is the nested top

action: an inner transaction commits independently of the outer

one, so the extension survives even if the outer transaction

rolls back. CUBRID runs disk_volume_expand and disk_add_volume

inside log_sysop_start / log_sysop_attach_to_outer system

operations.

Theory ↔ CUBRID mapping

Section titled “Theory ↔ CUBRID mapping”The textbook concepts of §“Theoretical Background” map to CUBRID’s

named entities as follows. ## CUBRID's Approach is the slow zoom

into each row.

| Theory | CUBRID name |

|---|---|

| OS file holding the engine’s pages | Volume — DISK_VOLUME_HEADER at page 0 of each OS file |

| Per-volume extent allocation bitmap | Sector Allocation Table (STAB), one bit per sector |

| Extent (allocation unit, > page) | Sector — 64 pages × 16 KB = 1 MB by default (DISK_SECTOR_NPAGES) |

| Page identifier | VPID = (volid, pageid); sector id is VSID = (volid, sectid) |

| Logical relation-level file | VFID = (volid, fileid); fileid is the page id of the file header |

| Permanent / temporary purpose split | DB_VOLPURPOSE ∈ {DB_PERMANENT_DATA_PURPOSE, DB_TEMPORARY_DATA_PURPOSE} |

| Permanent / temporary type split | DB_VOLTYPE ∈ {DB_PERMANENT_VOLTYPE, DB_TEMPORARY_VOLTYPE} |

| In-memory free-space cache | DISK_CACHE (disk_Cache global) |

| Per-purpose extent rollup | DISK_PERM_PURPOSE_INFO / DISK_TEMP_PURPOSE_INFO |

| Two-step allocation | disk_reserve_sectors → disk_reserve_from_cache + disk_reserve_sectors_in_volume |

| Partial / Full extent table per file | Partial Sectors Table + Full Sectors Table (in file_header) |

| Allocation-order index for ordered scans | User Page Table + FILE_FLAG_NUMERABLE |

| Generic page-spanning list | FILE_EXTENSIBLE_DATA (used for all three file tables and for the tracker) |

| Per-database file directory | File Tracker — itself a permanent file, located via boot_Db_parm->trk_vfid |

| Volume extension as nested top action | disk_extend → disk_volume_expand / disk_add_volume inside log_sysop_start |

| Per-transaction temporary file recycling | FILE_TEMPCACHE with cached and free lists |

CUBRID’s Approach

Section titled “CUBRID’s Approach”CUBRID instantiates the conventions above with two layered managers

sharing a hard contract: the disk manager owns volumes and

sectors, the file manager owns files and pages, and the line

between them is exactly disk_reserve_sectors. The distinguishing

choices are: (1) 64-page sectors as the only allocation unit

crossing the disk/file boundary — the file manager never asks for

“a page on volume V”; it asks for “N sectors anywhere of purpose P”;

(2) a two-step reservation protocol with the Disk Cache

serializing the cheap part (extent counting) and pushing the

expensive part (bitmap rewrite) outside the global mutex; (3) an

explicit permanent-vs-temporary split inside the cache so that

sort spills do not contend with table writes for the same extent

counter; (4) a three-table file layout (Partial, Full, optional

User Page) that keeps “where can I allocate next” in O(1) for the

common case; (5) volume extension as a nested top action so a

running transaction can grow the database without a cascade rollback

risk.

flowchart TB

classDef vol fill:#e0e7ff,stroke:#3730a3,color:#111

classDef sect fill:#fde68a,stroke:#b45309,color:#111

classDef pg fill:#bbf7d0,stroke:#166534,color:#111

classDef file fill:#fecaca,stroke:#991b1b,color:#111

subgraph PHYS["Physical hierarchy (disk manager)"]

direction TB

VOL["volume = one OS file"]:::vol

SEC["sector = 64 contiguous pages<br/>VSID = (volid, sectid)"]:::sect

PG["page = 16 KB<br/>VPID = (volid, pageid)"]:::pg

VOL -->|"contains"| SEC

SEC -->|"contains 64"| PG

end

subgraph LOG["Logical view (file manager)"]

FL["file = set of sectors<br/>VFID = (volid, fileid)<br/>(may span volumes)"]:::file

end

FL -.reserves.-> SEC

Figure 1 — The four-level hierarchy. A volume is one OS file

divided into fixed-size pages (16 KB by default); the disk

manager bundles 64 contiguous pages into one sector. A

file is a logical set of sectors — possibly drawn from many

volumes — owned by one relation (heap, index, catalog, …). Index

entries and the buffer pool name pages by VPID = (volid, pageid),

the disk-manager bitmap names sectors by VSID = (volid, sectid),

and the file manager names files by VFID = (volid, fileid). The

file manager hands out pages inside sectors it has reserved;

the disk manager hands out sectors inside volumes it has

formatted. (Source: DFM1 deck, slide 2.)

How a page-allocation request flows end-to-end

Section titled “How a page-allocation request flows end-to-end”sequenceDiagram

participant C as Caller (heap, btree, …)

participant FM as file_alloc

participant FH as file header page

participant DM as disk_reserve_sectors

participant DC as Disk Cache

participant ST as Sector Table (on-disk)

participant FIO as fileio (OS)

C->>FM: file_alloc(vfid, f_init)

FM->>FH: fix file header, read n_page_free

alt n_page_free > 0

FM->>FH: pick first item of Partial Sectors Table, set one bit

FH-->>FM: VPID of allocated page

else need more sectors

FM->>DM: disk_reserve_sectors(purpose, N)

DM->>DC: lock reserve mutex, walk vols[]

alt cache has enough sectors

DC-->>DM: vol/n distribution

else cache short

DM->>DC: lock extend mutex

DC->>FIO: disk_volume_expand / disk_add_volume

FIO-->>DC: new sectors created

DC-->>DM: vol/n distribution

end

DM->>ST: disk_reserve_sectors_in_volume — flip STAB bits

ST-->>DM: VSIDs reserved

DM-->>FM: array of VSIDs

FM->>FH: append to Partial Sectors Table — allocate the page

FH-->>FM: VPID of allocated page

end

FM->>C: page pointer + VPID — f_init initializes the page

The labeled boxes are unpacked in the subsections below.

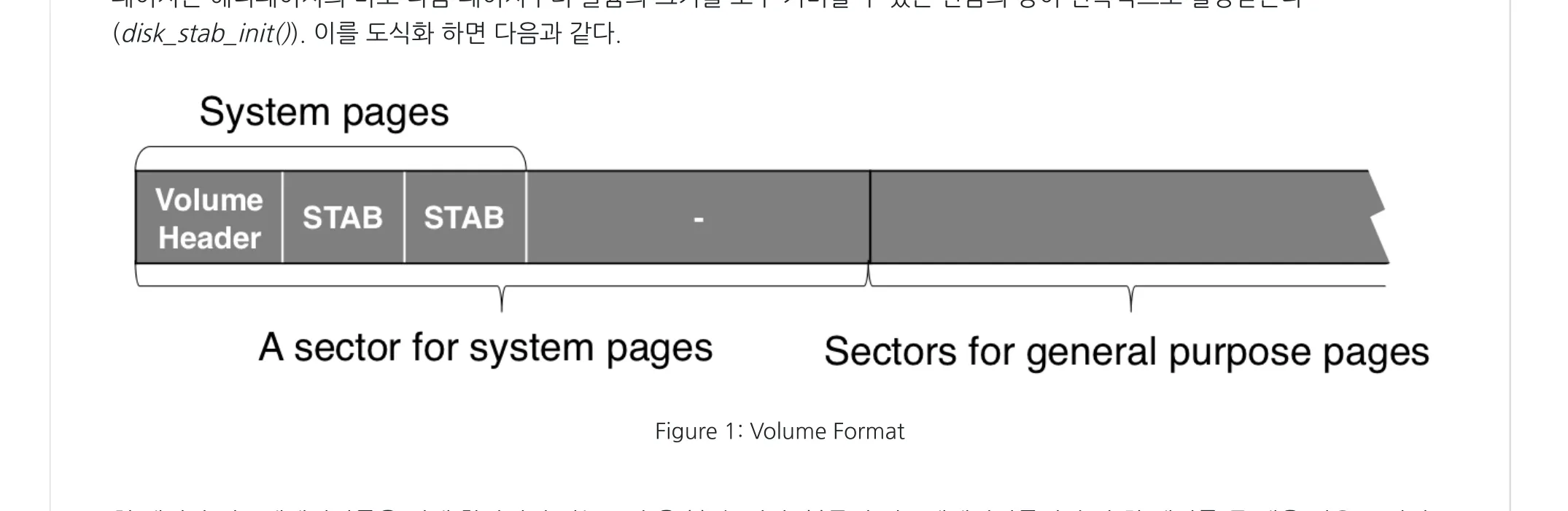

Volume layout — header page + sector allocation table

Section titled “Volume layout — header page + sector allocation table”Every volume is one OS file. Page 0 holds the volume header;

pages 1 through stab_npages hold the sector allocation table

(STAB) — a flat bitmap whose i-th bit indicates whether sector i

is reserved. The remaining pages are organized into sectors and

handed out by the disk manager.

Figure 2 — A volume is an OS file whose first sector is reserved for

system pages: the Volume Header at page 0 and a contiguous run of

STAB pages starting at stab_first_page. Subsequent sectors are

the general purpose sectors that the disk manager hands out via

disk_reserve_sectors. The number of STAB pages is sized at format

time to cover nsect_max (the volume’s growth ceiling), which is why

a freshly-formatted volume can grow without re-laying-out its header.

(Source: DFM2 deck, slide 1.)

The header carries the structural facts (page size, sector size,

volume id, nsect_total, nsect_max) and the recovery fact

(chkpt_lsa, the LSN at which the last successful checkpoint

inspected this volume).

// DISK_VOLUME_HEADER (condensed) — src/storage/disk_manager.cstruct disk_volume_header{ char magic[CUBRID_MAGIC_MAX_LENGTH]; /* "magic" + name for file(1) */ INT16 iopagesize; INT16 volid; INT8 db_charset; DB_VOLPURPOSE purpose; /* permanent or temporary purpose */ DB_VOLTYPE type; /* permanent or temporary type */ DKNPAGES sect_npgs; /* pages per sector (64) */ DKNSECTS nsect_total; /* current sectors */ DKNSECTS nsect_max; /* max growth, set at format */ SECTID hint_allocsect; /* where to start the next bitmap walk */ DKNPAGES stab_npages; /* STAB size in pages */ PAGEID stab_first_page; PAGEID sys_lastpage; /* ... */ LOG_LSA chkpt_lsa; /* recovery anchor */ HFID boot_hfid; /* boot heap (system params) */ /* ... */ INT16 next_volid; /* link to next volume */ /* variable-length region for full-path strings */ char var_fields[1];};The STAB is a bitmap, but the disk manager never reads it bit by

bit. It quantizes the bitmap into units (64-bit words) and walks

units with a disk_stab_iterate_units callback — bit64_count_zeros

on a unit answers “how many free sectors here?” in one instruction,

and disk_stab_unit_reserve flips bits in a hot register rather

than touching memory eight times. The bitmap-as-functor pattern

recurs on the file manager side (file_extdata_apply_funcs).

// DISK_STAB_CURSOR (condensed) — src/storage/disk_manager.ctypedef UINT64 DISK_STAB_UNIT;struct disk_stab_cursor{ const DISK_VOLUME_HEADER *volheader; PAGEID pageid; /* page in the STAB */ int offset_to_unit; /* unit offset in page */ int offset_to_bit; /* bit offset in unit */ SECTID sectid; PAGE_PTR page; /* fixed STAB page */ DISK_STAB_UNIT *unit; /* pointer to current unit */};Volume types and purposes

Section titled “Volume types and purposes”Two attributes classify a volume independently:

- Type (

DB_VOLTYPE) — what the volume itself is. ADB_PERMANENT_VOLTYPEvolume survives server restart; aDB_TEMPORARY_VOLTYPEvolume is unlinked on startup. - Purpose (

DB_VOLPURPOSE) — what data lives in it. ADB_PERMANENT_DATA_PURPOSEvolume holds tables, indexes, system data; aDB_TEMPORARY_DATA_PURPOSEvolume holds sort spills, hash partitions, query results.

Three of the four combinations exist:

| Type \ Purpose | DB_PERMANENT_DATA_PURPOSE | DB_TEMPORARY_DATA_PURPOSE |

|---|---|---|

DB_PERMANENT_VOLTYPE | usual table / index volume | user-pre-allocated addvoldb --purpose temp volume — survives restart, but holds temporary data |

DB_TEMPORARY_VOLTYPE | (does not exist) | auto-created sort spill volume — discarded on restart |

The third combination — permanent type + temporary purpose — exists

specifically so a DBA can pre-allocate a known amount of disk for

sorts without inviting the runtime to grow temporary volumes

unpredictably. When a temporary-purpose request comes in,

disk_reserve_from_cache tries the permanent-type-temp-purpose

volumes first (they cost nothing extra to keep) and falls back to

extending or adding a temporary-type volume only if those are full.

Disk Cache — in-memory free-space rollup

Section titled “Disk Cache — in-memory free-space rollup”The Disk Cache is one global struct. It carries (a) per-volume free counts and (b) two rollup structs, one per purpose, that summarize all the volumes of that purpose plus the parameters needed to grow them.

// DISK_CACHE / DISK_EXTEND_INFO (condensed) — src/storage/disk_manager.cstruct disk_cache{ int nvols_perm; int nvols_temp; DISK_CACHE_VOLINFO vols[LOG_MAX_DBVOLID + 1]; /* per-volume free counts */

DISK_PERM_PURPOSE_INFO perm_purpose_info; /* rollup for permanent purpose */ DISK_TEMP_PURPOSE_INFO temp_purpose_info; /* rollup for temporary purpose */

pthread_mutex_t mutex_extend; /* serializes volume growth */};

struct disk_extend_info{ volatile DKNSECTS nsect_free; /* total free sectors of this purpose */ volatile DKNSECTS nsect_total; volatile DKNSECTS nsect_max; /* growth ceiling across all volumes */ volatile DKNSECTS nsect_intention; /* requested-but-unsatisfied counter */

pthread_mutex_t mutex_reserve; /* short critical section */

DKNSECTS nsect_vol_max; /* per-volume growth cap */ VOLID volid_extend; /* the only volume that may auto-grow */ DB_VOLTYPE voltype;};DISK_TEMP_PURPOSE_INFO adds two more counters

(nsect_perm_total, nsect_perm_free) that track the

permanent-type-temp-purpose volumes separately so the reservation

code can prefer them.

The Disk Cache is the only structure that needs an in-memory

mutex on the hot path. The two-step protocol is what makes that

mutex short: Step 1 only mutates scalars under

mutex_reserve; Step 2 walks the on-disk bitmap with the

mutex not held, because Step 1 already proved the sectors are

reservable for this caller and decremented the counters

accordingly.

Two-step sector reservation

Section titled “Two-step sector reservation”// disk_reserve_sectors (sketch) — src/storage/disk_manager.cintdisk_reserve_sectors (THREAD_ENTRY *thread_p, DB_VOLPURPOSE purpose, VOLID volid_hint, int n_sectors, VSID *reserved_sectors){ DISK_RESERVE_CONTEXT context; /* ... condensed: arg checking, sysop start ... */

/* init context: the running ledger of "how many sectors I still need" */ context.nsect_total = n_sectors; context.n_cache_reserve_remaining = n_sectors; context.vsidp = reserved_sectors; /* OUT: sorted list of VSIDs */ context.n_cache_vol_reserve = 0; context.purpose = purpose;

/* STEP 1: pre-reserve in cache (may extend volumes) */ error_code = disk_reserve_from_cache (thread_p, &context, &did_extend); if (error_code != NO_ERROR) goto error;

/* STEP 2: commit to bitmap, one volume at a time */ for (iter = 0; iter < context.n_cache_vol_reserve; iter++) { error_code = disk_reserve_sectors_in_volume (thread_p, iter, &context); if (error_code != NO_ERROR) goto error; } /* ... condensed: sysop attach to outer, return ... */}The DISK_RESERVE_CONTEXT carries the running ledger between Step

1 and Step 2: per-volume reservation counts (cache_vol_reserve[],

filled by Step 1, drained by Step 2) and a remaining-sectors

counter that hits zero exactly when Step 1 finishes. Step 2’s

volume walks are independent — each one consults the on-disk STAB

of one volume and sets exactly the bits Step 1 promised it would.

A failure inside Step 2 is the awkward case (the counters in the

cache have already moved): the rollback path uses

disk_unreserve_ordered_sectors_without_csect to put the bits and

the cache back together.

Volume extension via nested top action

Section titled “Volume extension via nested top action”Step 1 may discover that the cache is short — even after considering

permanent-type-temp-purpose volumes for a temp request. It then

takes the extend mutex, double-checks the free count (a window where

another thread may have grown the volume already), and calls

disk_extend.

flowchart LR

classDef perm fill:#dbeafe,stroke:#1e40af,color:#111

classDef temp fill:#fee2e2,stroke:#991b1b,color:#111

classDef noext fill:#f3f4f6,stroke:#374151,color:#374151,stroke-dasharray:4 3

classDef ext fill:#dcfce7,stroke:#166534,color:#111

PR["permanent-purpose<br/>request"]:::perm

TR["temporary-purpose<br/>request"]:::temp

subgraph PERM_INFO["permanent extend-info rollup"]

PP["permanent-type<br/>permanent-purpose volumes"]:::ext

end

subgraph TEMP_INFO["temporary extend-info rollup"]

direction TB

PT["permanent-type temporary-purpose<br/>(DBA pre-allocated, no auto-extend)"]:::noext

TT["temporary-type<br/>temporary-purpose volumes"]:::ext

end

PR -->|"extend / add"| PP

TR -->|"1. check first"| PT

PT -->|"2. fall through"| TT

TR -.->|"3. extend / add"| TT

Figure 3 — How extension is routed by purpose. The disk cache holds two extend-info rollups, one per purpose. A permanent-purpose request that runs out of space extends or adds permanent-type permanent-purpose volumes. A temporary-purpose request that runs out of space first checks the permanent-type temporary-purpose volumes (DBA-pre-allocated), falls through to the temporary-type temporary-purpose rollup, and ultimately extends or adds temporary-type volumes. The permanent-type-temp-purpose row is not subject to auto-extend — it is whatever the user pre-allocated and no more. (Source: DFM4 deck, slide 1.)

disk_extend runs two policies in order:

- Expand the last-added volume up to its

nsect_vol_max(disk_volume_expand). Only one volume per purpose has space to grow at any time — the cache fieldvolid_extendnames it. Expanding before adding minimizes the number of OS files the engine creates. - Add a new volume when the last one is at its ceiling

(

disk_add_volume). This callsdisk_formatto write a new header + STAB + initial pages to a new OS file and then plumbs it intoboot_Db_parm, the_vinfregistry, and the cache.

Both steps run inside log_sysop_start / log_sysop_attach_to_outer,

making them nested top actions — they commit independently of

the outer transaction even on rollback. The reasoning is necessity:

the moment a transaction has touched a sector inside a freshly-grown

volume, every other transaction that also uses that sector would

have to roll back if the grower itself rolls back; nested top

action breaks the dependency.

// disk_extend (condensed) — src/storage/disk_manager.cstatic intdisk_extend (THREAD_ENTRY *thread_p, DISK_EXTEND_INFO *extend_info, DISK_RESERVE_CONTEXT *reserve_context){ /* what is the desired remaining free after expand? */ target_free = MAX ((DKNSECTS) (total * 0.01), DISK_MIN_VOLUME_SECTS); /* expand at least enough to cover the unsatisfied intention */ nsect_extend = MAX (target_free - free, 0) + intention; if (nsect_extend <= 0) return NO_ERROR;

if (total < max) { /* first expand last volume up to its capacity */ to_expand = MIN (nsect_extend, max - total); log_sysop_start (thread_p); error_code = disk_volume_expand (thread_p, extend_info->volid_extend, voltype, to_expand, &nsect_free_new); /* ... condensed: pre-reserve from the new sectors, sysop attach ... */ }

/* if still short, add new volumes */ while (nsect_extend > 0) { log_sysop_start (thread_p); error_code = disk_add_volume (thread_p, &volext, &volid_new, &nsect_free_new); /* ... condensed: pre-reserve, sysop attach ... */ } return NO_ERROR;}The expand-first-then-add policy is deliberate: it concentrates

data within a small set of OS files, reducing both the

open(2) traffic of restart and the number of headers the

backup tools have to read.

From sectors to a file — file_create

Section titled “From sectors to a file — file_create”Once disk_reserve_sectors returns N sorted VSIDs, the file

manager turns them into a real file. file_create does five

things:

- Estimate the total sector count (data + worst-case file tables)

and call

disk_reserve_sectors. - Pick one of the reserved sectors’ first page as the file

header page — its VPID becomes the file’s

VFID. - Initialize the file header (

FILE_HEADER) with the per-file metadata: file type, descriptors, tablespace policy, page / sector counters. - Write the three file tables (Partial Sectors, Full Sectors,

User Page if numerable) into the file header page, starting

at offsets

offset_to_partial_ftab,offset_to_full_ftab,offset_to_user_page_ftab. Tables overflow into separately- allocated FTAB pages viaFILE_EXTENSIBLE_DATAlinks. - Register the file with the File Tracker (permanent files only) so the rest of the engine can enumerate files of a given type.

// FILE_HEADER (condensed) — src/storage/file_manager.cstruct file_header{ INT64 time_creation; VFID self; /* (volid, fileid = header page id) */ FILE_TABLESPACE tablespace; /* growth policy */ FILE_DESCRIPTORS descriptor; /* per-type descriptor (heap, btree, …) */

int n_page_total, n_page_user, n_page_ftab, n_page_free; int n_page_mark_delete;

int n_sector_total, n_sector_partial, n_sector_full, n_sector_empty;

FILE_TYPE type; /* FILE_HEAP / FILE_BTREE / FILE_TEMP / … */ INT32 file_flags; /* FILE_FLAG_NUMERABLE | _TEMPORARY | … */

VOLID volid_last_expand; INT16 offset_to_partial_ftab; INT16 offset_to_full_ftab; INT16 offset_to_user_page_ftab;

VPID vpid_sticky_first; /* survives dealloc — file-type-specific root */

/* temporary-file optimisation: cursor into Partial Sectors Table */ VPID vpid_last_temp_alloc; int offset_to_last_temp_alloc;

/* numerable-file optimisations */ VPID vpid_last_user_page_ftab; VPID vpid_find_nth_last; int first_index_find_nth_last; /* ... reserved fields ... */};File architecture — three tables atop one bitmap

Section titled “File architecture — three tables atop one bitmap”The file’s metadata divides into system pages (the file header page plus any FTAB-overflow pages) and user pages (the actual heap rows / btree nodes / catalog records).

flowchart TB

classDef fileA fill:#fecaca,stroke:#991b1b,color:#111

classDef fileB fill:#bfdbfe,stroke:#1e40af,color:#111

classDef fileC fill:#fde68a,stroke:#b45309,color:#111

classDef free fill:#ffffff,stroke:#9ca3af,color:#374151

classDef tab fill:#e9d5ff,stroke:#6b21a8,color:#111

subgraph V1["volume V1"]

direction LR

V1P1["A"]:::fileA

V1P2["B"]:::fileB

V1P3["A"]:::fileA

V1P4["·"]:::free

V1P5["C"]:::fileC

V1P6["B"]:::fileB

end

subgraph V2["volume V2"]

direction LR

V2P1["·"]:::free

V2P2["A"]:::fileA

V2P3["C"]:::fileC

V2P4["A"]:::fileA

V2P5["B"]:::fileB

V2P6["·"]:::free

end

subgraph FA["file A — three-table view (in file header page)"]

direction TB

TAB_PART["Partial Sectors Table"]:::tab

TAB_FULL["Full Sectors Table"]:::tab

TAB_USER["User Page Table (numerable only)"]:::tab

end

FA -.indexes.-> V1P1

FA -.indexes.-> V1P3

FA -.indexes.-> V2P2

FA -.indexes.-> V2P4

Figure 4 — Volumes vs files. Each volume (gray) holds a contiguous run of pages; the disk manager stamps reservations on those pages for multiple files at once (red, blue, …). One file’s reserved pages are not contiguous — they are scattered across whichever sectors the disk manager could find. The file manager hides this scatter by giving the file an in-memory table view of its sectors, so callers ask for “the next page in file F” without ever seeing volume-level layout. (Source: DFM5 deck, slide 1.)

Inside the file, three tables index those sectors and pages:

- Partial Sectors Table — entries are

FILE_PARTIAL_SECTOR = (VSID, FILE_ALLOC_BITMAP)where the bitmap is a 64-bit word, one bit per page in the sector. A sector lives here as long as at least one page in it is free. - Full Sectors Table — entries are bare VSIDs. A sector moves here when its bitmap is all-1s; it moves back to Partial when a page is deallocated.

- User Page Table (numerable files only) — entries are bare

VPIDs in allocation order. Used by

file_numerable_find_nthfor external sort.

All three tables are instances of FILE_EXTENSIBLE_DATA, the

generic page-spanning singly-linked list that the file manager (and

the file tracker) reuses for every “list of variably many items

across pages” need.

// FILE_EXTENSIBLE_DATA (condensed) — src/storage/file_manager.cstruct file_extensible_data{ VPID vpid_next; /* next page or VPID_NULL */ INT16 max_size; /* component capacity in bytes */ INT16 size_of_item; INT16 n_items;};Items follow the header in the same page, sized by size_of_item.

Operations include file_extdata_apply_funcs (the file-side analog

of disk_stab_iterate_units — apply f_extdata to each component

page and f_item to each item). Searches across an ordered table use

binary search within a component and linear chase across components.

File-table layout differs by file type

Section titled “File-table layout differs by file type”The three tables share the file header page but in different proportions depending on (a) whether the file is permanent or temporary and (b) whether it is numerable.

| File type | Partial Sectors | Full Sectors | User Page Table |

|---|---|---|---|

| Permanent, non-numerable | 1/2 | 1/2 | — |

| Permanent, numerable | 1/3 | 1/3 | 1/3 |

| Temporary, non-numerable | full header | — | — |

| Temporary, numerable | 1/2 | — | 1/2 |

Figure 5 — Four flavors of the file header page. Permanent

non-numerable files split the header page evenly between Partial

and Full Sectors Tables (no User Page Table). Permanent

numerable files give one third each to Partial / Full / User

Page. Temporary non-numerable files use only a Partial Sectors

Table — temporary files never deallocate a page, so a sector never

leaves Partial. Temporary numerable files split between Partial

and User Page. The numbers are the relative byte budgets of each

table inside the header page; once a table fills, it overflows to a

fresh FTAB-allocation page reachable via FILE_EXTENSIBLE_DATA.vpid_next.

(Source: DFM6 deck, slide 1.)

Page allocation — permanent files

Section titled “Page allocation — permanent files”Permanent-file allocation walks the Partial Sectors Table head:

// file_perm_alloc (high-level sketch) — src/storage/file_manager.c//// 1. If fhead->n_page_free == 0, call file_perm_expand:// reserve more sectors via disk_reserve_sectors and// append the resulting partial-sector entries to the table.// 2. If the in-header Partial Sectors Table is empty, drag entries// from the next FTAB page into the header// (file_table_move_partial_sectors_to_header), so the head item// is always in the file header page itself.// 3. The first item of Partial Sectors Table is, by invariant, a// sector with at least one free page. Set one bit in its bitmap// via file_partsec_alloc; that bit's offset gives the page id.// 4. If the bitmap is now all-1s, move the entry to the Full// Sectors Table (and possibly free the page that used to host// the next-Partial-table component).// 5. If the file is numerable, append the new page's VPID to the// User Page Table.Two invariants make the head-of-table-only access safe:

- The head sector always has at least one free page — guaranteed by step 4: the moment a sector fills, it leaves Partial.

- The header always has at least one Partial entry when

n_page_free > 0— guaranteed by step 2: when the in-header Partial table empties but a next-page Partial table exists, its entries are dragged in.

Page allocation — temporary files

Section titled “Page allocation — temporary files”Temporary files do not deallocate, so:

- They keep only a Partial Sectors Table (no Full table).

- Allocation is sequential: the file header caches

(vpid_last_temp_alloc, offset_to_last_temp_alloc)— the page and offset into the Partial table where the next sector to draw from lives. Eachfile_temp_allocadvances the cursor. - Bookkeeping is therefore O(1) per allocation with no logging.

The expensive Partial-Full migration and the WAL-record stream that permanent files pay for are entirely absent.

Page deallocation

Section titled “Page deallocation”Page deallocation is the inverse of permanent allocation, with two twists:

- Postpone via

log_append_postpone. The bit is not actually flipped at the moment offile_dealloc; it is staged as a postponed log record and replayed when the transaction commits. The reasoning is exactly the reservation-side reasoning: a released page must not be reused by another transaction until the releasing one is durable. - Buffer-pool eviction hint. After deallocation,

pgbuf_dealloc_pageresets the page type toPAGE_UNKNOWNand pushes the BCB into LRU 3 so the buffer manager reuses it quickly. (Seecubrid-page-buffer-manager.md§“Zones — five-state classification”.)

File destruction and the File Tracker

Section titled “File destruction and the File Tracker”file_destroy walks the Partial and Full tables to collect every

VSID the file owns, walks all FTAB and user pages to evict them

from the buffer pool, and finally calls

disk_unreserve_ordered_sectors to give the sectors back to the

disk manager. Like deallocation, destruction is postponed via

log_append_postpone and runs at commit time.

The File Tracker is one permanent file per database whose body

is one giant FILE_EXTENSIBLE_DATA whose items are

FILE_TRACK_ITEM = (volid, fileid, type, metadata). Every

permanent-file create registers; every permanent-file destroy

deregisters. The tracker’s location is itself stored in

boot_Db_parm->trk_vfid, and on server restart

file_Tracker_vpid is loaded into a global so the tracker can be

found in O(1). Callers use file_tracker_map /

file_tracker_interruptable_iterate to enumerate files of a given

type — heap-file reuse, vacuum’s dropped-files scan, and

backup all start there.

Temporary file cache

Section titled “Temporary file cache”Temporary files turn over fast — every sort of every query

allocates and abandons one. Recreating the file every time is the

naive policy; CUBRID’s FILE_TEMPCACHE keeps a free-list of

recently-retired temporary files, indexed separately for numerable

and non-numerable variants (their header layouts differ).

States:

- Create — first checks the cached list; on miss creates a fresh file and links it to the current transaction’s tempcache entry list.

- Retire (transaction end) — at

log_commit_local/log_abort_local, the per-transaction list is drained: each file gets its user pages reset and goes into the cached list (or is destroyed if the cache is full). - Preserve — a query manager that wants a temp file to outlive

its creating transaction calls

file_temp_preserve; the file leaves the per-transaction list and goes onto a global “kept by query manager” list. - Retire (preserved) — query manager destroys preserved files

via

file_temp_retire_preservedonce the result set is done.

Source Walkthrough

Section titled “Source Walkthrough”Anchor on symbol names, not line numbers. The CUBRID source moves; a function name (or struct/enum tag) is the stable handle. Use

git grep -n '<symbol>' src/storage/to locate the current position. The line numbers in the position-hint table at the end of this section were observed when the document was lastupdated:and are intended only as quick hints.

Type definitions — disk side (src/storage/disk_manager.{h,c})

Section titled “Type definitions — disk side (src/storage/disk_manager.{h,c})”struct disk_volume_header(indisk_manager.c) — on-disk volume header; magic, ids, sector counts, STAB offsets, checkpoint LSA.enum DB_VOLPURPOSE(storage_common.h) —PERMANENT,TEMPORARY,UNKNOWN.enum DB_VOLTYPE—PERMANENT_VOLTYPE,TEMPORARY_VOLTYPE.struct disk_cache(indisk_manager.c) —disk_Cache, the global free-space rollup.struct disk_extend_info— per-purpose extension counters and mutex.struct disk_perm_info/struct disk_temp_info— purpose-tagged wrappers arounddisk_extend_info; the temp variant addsnsect_perm_total/nsect_perm_freefor permanent-type-temp-purpose volumes.struct disk_cache_volinfo—(purpose, nsect_free)per volume.struct disk_reserve_context(indisk_manager.c) — the running ledger between Step 1 and Step 2.struct disk_cache_vol_reserve—(volid, nsect)rows that Step 2 walks.typedef DISK_STAB_UNIT—UINT64, the bitmap manipulation unit.struct disk_stab_cursor— bitmap walk handle.

Type definitions — file side (src/storage/file_manager.{h,c})

Section titled “Type definitions — file side (src/storage/file_manager.{h,c})”enum FILE_TYPE(file_manager.h) — 14 file types (FILE_TRACKER/FILE_HEAP/FILE_HEAP_REUSE_SLOTS/FILE_BTREE/FILE_BTREE_OVERFLOW_KEY/FILE_EXTENDIBLE_HASH/FILE_EXTENDIBLE_HASH_DIRECTORY/FILE_CATALOG/FILE_DROPPED_FILES/FILE_VACUUM_DATA/FILE_QUERY_AREA/FILE_TEMP/FILE_MULTIPAGE_OBJECT_HEAP/FILE_UNKNOWN_TYPE).struct file_header(infile_manager.c) — per-file metadata.struct file_tablespace(file_manager.h) — initial size + expansion policy (expand_ratio,expand_min_size,expand_max_size).union file_descriptors— type-tagged per-file descriptor (heap, btree, etc.); fixed 64 bytes for ABI compatibility.struct file_partial_sector—(VSID, FILE_ALLOC_BITMAP), 64-bit bitmap.typedef FILE_ALLOC_BITMAP—UINT64.struct file_extensible_data(infile_manager.c) — generic page-spanning list header.- File flags —

FILE_FLAG_NUMERABLE,FILE_FLAG_TEMPORARY,FILE_FLAG_ENCRYPTED_AES,FILE_FLAG_ENCRYPTED_ARIA.

Lifecycle (disk_manager.c / file_manager.c)

Section titled “Lifecycle (disk_manager.c / file_manager.c)”disk_manager_init/disk_manager_final— module init / teardown; loaddisk_Cachefrom on-disk volume info.disk_cache_init/disk_cache_final/disk_cache_load_all_volumes/disk_cache_load_volume— cache rebuild.file_manager_init/file_manager_final.disk_format_first_volume— initial databasecreatedb.disk_format— write a fresh volume’s header + STAB.disk_unformat— remove a volume’s OS file (database delete path).

Sector reservation (disk_manager.c)

Section titled “Sector reservation (disk_manager.c)”disk_reserve_sectors— public entry; outer driver of the two-step protocol, runs inside a system operation.disk_reserve_from_cache— Step 1; consults the cache, callsdisk_extendif short.disk_reserve_from_cache_vols— iterate volumes, picking reservation candidates.disk_reserve_from_cache_volume— pre-reserve from one volume (decrements counters, fillscache_vol_reserve[]).disk_reserve_sectors_in_volume— Step 2; flips bits in the on-disk STAB.disk_unreserve_ordered_sectors/disk_unreserve_ordered_sectors_without_csect— release path (called byfile_destroy).

Volume extension (disk_manager.c)

Section titled “Volume extension (disk_manager.c)”disk_extend— extension router; expand-then-add.disk_volume_expand— grow last volume up to itsnsect_max.disk_add_volume— create a fresh OS file viadisk_formatand link it into the cache.disk_add_volume_extension— public entry foraddvoldband for the boot-time pre-allocation path.

STAB iteration (disk_manager.c)

Section titled “STAB iteration (disk_manager.c)”disk_stab_iterate_units— per-unit map; user suppliesDISK_STAB_UNIT_FUNC.disk_stab_unit_reserve/disk_stab_unit_unreserve— bit-flip primitives.disk_stab_count_free— count zero bits in a unit (used by the consistency check and by recovery’sdisk_rv_volhead_extend_redo).disk_stab_cursor_set_at_sectid/disk_stab_cursor_set_at_start/disk_stab_cursor_set_at_end— cursor positioning.

File create / destroy / track (file_manager.c)

Section titled “File create / destroy / track (file_manager.c)”file_create— generic create; takesFILE_TYPE,tablespace, numerable flag.file_create_heap/file_create_temp/file_create_temp_numerable/file_create_query_area/file_create_ehash/file_create_ehash_dir— type-specific wrappers.file_destroy/file_postpone_destroy— destruction (postponed to commit).file_temp_retire/file_temp_retire_preserved/file_temp_preserve/file_temp_truncate— temporary file lifecycle.file_tracker_create/file_tracker_load/file_tracker_register/file_tracker_unregister/file_tracker_map/file_tracker_interruptable_iterate— the per-database file directory.

Page allocation / deallocation (file_manager.c)

Section titled “Page allocation / deallocation (file_manager.c)”file_alloc— public entry; dispatches byFILE_IS_TEMPORARY.file_perm_alloc— permanent-file allocation walking the head of the Partial Sectors Table.file_temp_alloc— temporary-file allocation advancing the cursor in the file header.file_perm_expand— when out of free pages, calldisk_reserve_sectorsand append to Partial.file_table_move_partial_sectors_to_header— pull entries from next-page Partial into the header so the head item invariant holds.file_partsec_alloc— flip one bit in aFILE_PARTIAL_SECTORbitmap, returning the page offset.file_perm_dealloc— flip the bit back, possibly move from Full to Partial.file_dealloc/file_rv_dealloc_on_postpone— postponed deallocation.file_alloc_sticky_first_page/file_get_sticky_first_page— file-type-specific root page that survives all dealloc paths.

Numerable file (file_manager.c)

Section titled “Numerable file (file_manager.c)”file_numerable_add_page— append to User Page Table on alloc.file_numerable_find_nth— find the n-th allocated user page; uses(vpid_find_nth_last, first_index_find_nth_last)cache.file_numerable_truncate— drop the tail of User Page Table.

Extensible data primitives (file_manager.c)

Section titled “Extensible data primitives (file_manager.c)”file_extdata_init/file_extdata_max_size/file_extdata_size/file_extdata_is_full/file_extdata_item_count/file_extdata_remaining_capacity— inline accessors.file_extdata_append/file_extdata_insert_at/file_extdata_remove_at/file_extdata_merge— mutators.file_extdata_apply_funcs— page-and-item map.file_extdata_func_for_search_ordered/file_extdata_item_func_for_search— binary search hooks.

Temporary file cache (file_manager.c)

Section titled “Temporary file cache (file_manager.c)”file_tempcache_get/file_tempcache_put— obtain or release a cached temp file.file_tempcache_drop_tran_temp_files— called atlog_commit_local/log_abort_local.file_get_tran_num_temp_files— debug / monitoring.

Recovery (disk_manager.c + file_manager.c)

Section titled “Recovery (disk_manager.c + file_manager.c)”disk_rv_redo_dboutside_newvol/disk_rv_undo_format/disk_rv_redo_format/disk_rv_redo_volume_expand/disk_rv_volhead_extend_redo/disk_rv_volhead_extend_undo— volume create/extend recovery.disk_rv_reserve_sectors/disk_rv_unreserve_sectors— STAB-bit recovery.disk_rv_undoredo_link— multi-volume linkage recovery.file_rv_destroy/file_rv_perm_expand_redo/file_rv_perm_expand_undo/file_rv_partsec_set/file_rv_partsec_clear/file_rv_extdata_set_next/file_rv_extdata_add/file_rv_extdata_remove/file_rv_extdata_merge/file_rv_fhead_alloc/file_rv_fhead_dealloc/file_rv_dealloc_on_undo/file_rv_dealloc_on_postpone/file_rv_user_page_mark_delete/file_rv_user_page_unmark_delete_logical/file_rv_user_page_unmark_delete_physical/file_rv_tracker_unregister_undo/file_rv_tracker_mark_heap_deleted/file_rv_tracker_mark_heap_deleted_compensate_or_run_postpone/file_rv_tracker_reuse_heap— file-side recovery.

Position hints as of this revision

Section titled “Position hints as of this revision”These line numbers held when the document was last updated:. If

you land at a different definition, the symbol name above is

authoritative; update the table on your way through. disk_manager.c

is ≈ 6 100 lines and file_manager.c is ≈ 11 000 lines; symbol-level

git grep is the recommended lookup.

| Symbol | File | Line |

|---|---|---|

struct disk_volume_header | disk_manager.c | 75 |

struct disk_cache | disk_manager.c | 194 |

struct disk_extend_info | disk_manager.c | 162 |

struct disk_perm_info | disk_manager.c | 180 |

struct disk_temp_info | disk_manager.c | 186 |

struct disk_stab_cursor | disk_manager.c | 229 |

struct disk_reserve_context | disk_manager.c | 281 |

disk_format | disk_manager.c | 512 |

disk_extend | disk_manager.c | 1633 |

disk_volume_expand | disk_manager.c | 1904 |

disk_add_volume | disk_manager.c | 2117 |

disk_add_volume_extension | disk_manager.c | 2326 |

disk_cache_load_volume | disk_manager.c | 2567 |

disk_cache_init | disk_manager.c | 2627 |

disk_cache_load_all_volumes | disk_manager.c | 2714 |

disk_stab_iterate_units | disk_manager.c | 3665 |

disk_reserve_sectors_in_volume | disk_manager.c | 4066 |

disk_reserve_sectors | disk_manager.c | 4290 |

disk_reserve_from_cache | disk_manager.c | 4463 |

disk_reserve_from_cache_vols | disk_manager.c | 4612 |

disk_reserve_from_cache_volume | disk_manager.c | 4666 |

disk_unreserve_ordered_sectors | disk_manager.c | 4703 |

disk_format_first_volume | disk_manager.c | 5062 |

enum FILE_TYPE | file_manager.h | 38 |

struct file_tablespace | file_manager.h | 142 |

struct file_partial_sector | file_manager.h | 161 |

struct file_header | file_manager.c | 89 |

struct file_extensible_data | file_manager.c | 231 |

file_extdata_apply_funcs | file_manager.c | 1886 |

file_create | file_manager.c | 3311 |

file_destroy | file_manager.c | 4121 |

file_perm_expand | file_manager.c | 4644 |

file_table_move_partial_sectors_to_header | file_manager.c | 4772 |

file_perm_alloc | file_manager.c | 5166 |

Source verification (as of 2026-05-01)

Section titled “Source verification (as of 2026-05-01)”Each entry is a fact about the current source — readable without the original analysis materials. The trailing note shows how it was checked and, where relevant, historical drift or limits of verification. Open questions follow as the curator’s recorded gaps; future readers should treat them as starting points, not as known bugs.

Verified facts

Section titled “Verified facts”-

A sector is exactly 64 pages.

DISK_SECTOR_NPAGESis a compile-time constant; with the default 16 KB page that yields a 1 MB sector. Verified throughDISK_SECTS_NPAGESand the invariants used indisk_reserve_sectors_in_volumeon 2026-05-01. Not a runtime parameter — a build-time change would require log format compatibility analysis. -

STAB units are unconditionally 64-bit.

DISK_STAB_UNITisUINT64; the comment indisk_manager.cnotes that changing the type “should be handled automatically” but the consumer side hard-codes 64-bit operations (bit64_count_zeros,disk_stab_unit_*). Verified on 2026-05-01. The DFM2 deck flagged the same hidden coupling — still present. -

Sector reservation is two-step with one shared mutex per purpose.

disk_reserve_from_cacheacquiresextend_info->mutex_reserve, walksdisk_Cache->vols[], decrementsnsect_freecounters, and releases. Step 2 (disk_reserve_sectors_in_volume) runs without the cache mutex — only the on-disk STAB page latch protects it. Verified by readingdisk_reserve_sectorson 2026-05-01. -

The cache and the STAB can be transiently out of sync. During the gap between Step 1 and Step 2, the cache shows a sector as reserved and the bitmap still shows it as free. This is safe because (a) Step 1 holds the

cache_vol_reserve[]ledger so the bit is implicitly owned by the calling context, and (b) the reservation order is alwayscache → bitmapwhile the release order isbitmap → cache, so the cache always undercounts free sectors and never overcounts. Verified by readingdisk_reserve_sectors_in_volumeanddisk_unreserve_sectors_from_volumeon 2026-05-01. The DFM3 deck spelled this out explicitly — still holds. -

Volume extension is a nested top action.

disk_extendwraps bothdisk_volume_expandanddisk_add_volumeinlog_sysop_start/log_sysop_attach_to_outer. Verified 2026-05-01. -

Last-volume-only auto-extend.

extend_info->volid_extendnames the one volume per purpose that is allowed to grow; new volumes are only added once that volume hitsnsect_vol_max. Verified indisk_extendanddisk_add_volumeon 2026-05-01. -

Permanent-type / temporary-purpose volumes are user-only.

addvoldb --purpose tempis the only path that creates them; the auto-extension code path (disk_extendfortemp_purpose_info) extends or adds temporary-type volumes only. Verified by readingdisk_reserve_from_cache‘s preference fornsect_perm_free > 0before falling through to the temporary-type rollup. -

Sector deallocation is postponed for permanent purposes only.

disk_unreserve_ordered_sectorscallslog_append_postponefor permanent purposes; temporary-purpose unreserve runs immediately. Verified on 2026-05-01. Rationale: a committed transaction must not see released sectors taken by another transaction mid-commit; for temp data nothing logs. -

disk_Cachepage-table size isLOG_MAX_DBVOLID + 1slots.vols[]instruct disk_cacheis sized byLOG_MAX_DBVOLID, the same constant the log manager uses for volume IDs. A volume count beyond this would also break the log. Verified on 2026-05-01. -

vpid_sticky_firstis not deallocated on user-page release.file_alloc_sticky_first_pagesetsfhead->vpid_sticky_firstandfile_dealloc/file_perm_deallocshort-circuit onvpid_sticky_first. Verified on 2026-05-01. Each file type uses its sticky page for type-specific roots (heap header, btree root, …). -

file_temp_allocadvances a header cursor and never deallocates. Verified by reading the temp-alloc path on 2026-05-01. The DFM6 deck called this out as the reason temporary files have no Full Sectors Table — invariant still holds. -

File Tracker is a permanent file with no special header. Its body is one big

FILE_EXTENSIBLE_DATAofFILE_TRACK_ITEMentries. The tracker’s location is inboot_Db_parm->trk_vfidon disk andfile_Tracker_vpidin memory after restart. Verified by readingfile_tracker_createand the boot path on 2026-05-01.

Open questions

Section titled “Open questions”-

Auto-volume-expansion daemon. The hand-authored

disk_manager.mdreferences adisk_auto_volume_expansion_daemonanddisk_manager_initreadingprm_get_integer_value (PRM_ID_BOSR_MAXTMP_PAGES). As of 2026-05-01 the daemon is not present in the source — neitherdisk_auto_volume_expansion_daemon_initnordisk_auto_volume_expansion_daemon_destroyresolves agit grep. The daemon was removed at some point; investigation: trace the commit that removed it and confirm the auto-extend loop is now carried entirely by foreground reservation paths (which would make thensect_intentionaccumulator the load-bearing piece). -

disk_Temp_max_sectsinteraction with reservation. The global is set fromPRM_ID_BOSR_MAXTMP_PAGESat init and capped toSECTID_MAXif negative. Where exactly does it gate reservation?disk_reserve_from_cachedoes not appear to consult it directly. Investigation:git grep disk_Temp_max_sectsand trace its consumers. -

hint_allocsectactual usefulness.volheader->hint_allocsectis updated bydisk_reserve_sectors_in_volumeto point past the most-recently-allocated VSID, in the hope that the next reservation continues from there. Under high-concurrency workloads with many releases, the hint may be no better than “scan from 0”. Investigation: instrument hit-rate of the hint under TPC-C-like and sort-heavy workloads. -

reserved0..3fields inDISK_VOLUME_HEADERandFILE_HEADER. Both structs reserve fourINT32words for “future extension” — almost certainly some are now consumed by newer features (TDE, OOS). Investigation: check git blame and the OOS feature design doc to see which reserved bits are now live. -

FILE_FLAG_ENCRYPTED_AES/FILE_FLAG_ENCRYPTED_ARIAin relation tovpid_sticky_first. The doc treats encryption as transparent to the disk/file layer; in fact the file flags carry encryption state and the file manager exposesfile_get_tde_algorithm/file_apply_tde_algorithm. The reservation/allocation paths are unaffected, butfile_perm_dealloc’s “zero before release” semantics for encrypted files would be worth verifying. Investigation: tracefile_apply_tde_algorithmconsumers and check theFILE_IS_TDE_ENCRYPTEDbranches. -

What does

file_temp_truncateactually do? The header declares it but thedisk_manager.mdand the DFM6/DFM7 decks do not describe it. Investigation: read the body and find callers in the query manager. -

Concurrent volume formats during recovery. The recovery path uses

disk_rv_redo_dboutside_newvolto recreate volumes that did not exist at the start of recovery — a path that touches the OS. Investigation: trace the redo handler under the assumption of partial mid-disk_formatcrashes (header written, STAB partially written, data not written) and confirm the redo idempotency.

Beyond CUBRID — Comparative Designs & Research Frontiers

Section titled “Beyond CUBRID — Comparative Designs & Research Frontiers”Pointers, not analysis. Each bullet is a starting handle for a follow-up doc; depth here is intentionally shallow.

-

PostgreSQL relfilenode + FSM fork. PostgreSQL uses one OS file per relation fork (

base/<dboid>/<relnode>), with a separate FSM file per relation tracking page-level free space and a VM file tracking visibility. There is no extent layer — allocation is per-page through the storage manager (smgr_extend). CUBRID’s sector + Partial/Full structure is one cohesive answer to what PostgreSQL splits across_fsm,_vm, and the table fork. A side-by-side write-up would frame the trade-off (CUBRID: less metadata, more lock contention; PostgreSQL: more files, less coordination per allocation). -

Oracle tablespaces / segments / extents / blocks. Oracle’s four-level hierarchy maps almost one-to-one with CUBRID’s purpose / file / sector / page, but with two differences: Oracle’s segment header lives at the start of the segment with freelist groups (multiple lists, one per session class) for contention-free allocation, and Oracle has bigfile tablespaces where one tablespace is one segment-of-extents. A comparison with CUBRID’s single Partial Sectors Table head would clarify which workloads each policy optimizes.

-

InnoDB tablespace / file space / extent / page. InnoDB’s

XDESextent-descriptor entries (in segment-inode pages) carry per-page state bits (free / clean / dirty), and segments own inode pages that link to their extents. The segment-inode / file-header / extent-descriptor split is closely analogous to CUBRID’sfile_header/ Partial / Full split. Recommended reading: MySQL InnoDB Storage Engine docs §“InnoDB On-Disk Structures”. -

SQL Server filegroup / file / extent / page. SQL Server’s uniform extents (all 8 pages owned by one object) and mixed extents (pages from up to 8 small objects sharing one extent) trade off small-table density against large-table speed. CUBRID has only the uniform-extent equivalent; “what would mixed extents look like in CUBRID” is a useful thought experiment for workloads with thousands of small tables.

-

NVMe-aware allocation. With 16 KB pages on a device whose internal block is 16 KB or 32 KB, the torn-write risk that the Double Write Buffer (

cubrid-page-buffer-manager.md) defends against partly evaporates. Bittman et al., Don’t Stack Your Log On My Log (USENIX HotStorage 2014), and the LeanStore line (Leis et al., ICDE 2018) re-examine the assumption that the engine should manage allocation at extent granularity at all. Worth re-evaluating CUBRID’s sector size against modern SSDs. -

Persistent memory and zero-copy storage. Engines targeting Optane PMEM (FaceBook RocksDB-PMem, MS FishStore) skip the buffer manager entirely and treat storage as memory; the disk-manager abstraction collapses into the allocator. CUBRID’s volume / file layer is the level that would have to change first if PMEM support were added.

-

Lock-free free-space tracking. Sadoghi et al., LeanStore (ICDE 2018), and the FineLine line of work (Sauer et al., VLDB 2020) maintain extent-level free-space metadata in lock-free structures. CUBRID’s

mutex_reserveper purpose is a known bottleneck on machines with > 32 cores; comparison would quantify the gap. -

Segment-level checkpointing (FineLine). FineLine drops the per-page LSN ordering in favor of segment-level checkpoints, which would reshape

chkpt_lsainDISK_VOLUME_HEADERif CUBRID adopted it. The frontier paper is Sauer et al., FineLine: Log-structured Transactional Storage and Recovery (VLDB 2020).

The intent of this section is to seed next documents, not to analyze. Each bullet should become its own curated note when its turn comes.

Sources

Section titled “Sources”Raw analyses (under raw/code-analysis/cubrid/storage/disk_manager/)

Section titled “Raw analyses (under raw/code-analysis/cubrid/storage/disk_manager/)”DFM1_overview.pdf— terms (volume, sector, file, page), disk-manager / file-manager split, volume types and purposes.DFM2_volume_architectur.pdf— volume header, sector allocation table, STAB unit/cursor iteration pattern.DFM3_sector_reservation.pdf— two-step protocol,DISK_RESERVE_CONTEXT,DISK_CACHE,DISK_EXTEND_INFO, unreserve walk, log_append_postpone hand-off.DFM4_volume_extention.pdf— extension routing by purpose, expand-then-add policy, nested top-action rationale,disk_volume_expand/disk_add_volume/disk_add_volume_extension.DFM5_file_architecture.pdf— file vs volume, file types, page types (FTAB / user / system), numerable property, FILE_HEADER fields.DFM6_page_allocation.pdf— three file tables, FileExtendibleData format and operations, perm vs temp allocation flow, page deallocation.DFM7_file_creation.pdf— file create / destroy, File Tracker, Temp Cache state machine.Disk Manager 1주차 분석 QnA.pdf— clarifications onPRM_ID_BOSR_MAXTMP_PAGES,disk_Temp_max_sects,boot_sr.csemantics.disk_manager_presentation_material.pdf— consolidated presentation deck covering the same seven topics.[코드분석]volume_file.pptx— author’s PPTX with figures reused in this doc’s figure list.disk_manager.md— earlier hand-authored Korean prose (12.5 KB); used as a glossary cross-check.

Notion (CUBRID DEV WIKI)

Section titled “Notion (CUBRID DEV WIKI)”- Storage – Concurrency 코드 분석 — module-level positioning; Disk Manager / File Manager are the substrate that everything else (heap, btree, catalog, log) sits on.

Textbook chapters (under knowledge/research/dbms-general/)

Section titled “Textbook chapters (under knowledge/research/dbms-general/)”- Database Internals (Petrov), Ch. 1 “Introduction and Overview” — page-organized storage and the buffer/storage line.

- Database Internals (Petrov), Ch. 3 “File Formats” — slotted pages, page identifiers, free-space management, segment / extent allocation.

- Database System Concepts (Silberschatz, Korth, Sudarshan, 6th ed.), Ch. 13 “Storage and File Structure” — file organization, fixed-length and variable-length records.

- Mohan et al., ARIES: A Transaction Recovery Method (TODS 1992)

— nested top action, the basis for

log_sysop_*. - Liskov & Scheifler, Guardians and Actions (POPL 1982) — earlier formal account of nested top action.

CUBRID source (under /data/hgryoo/references/cubrid/)

Section titled “CUBRID source (under /data/hgryoo/references/cubrid/)”src/storage/disk_manager.hsrc/storage/disk_manager.csrc/storage/file_manager.hsrc/storage/file_manager.csrc/storage/storage_common.h(DB_VOLPURPOSE / DB_VOLTYPE / VFID / VPID / VSID)src/transaction/log_manager.h(log_sysop_start/log_append_postponereferenced from this document)